Trusted by the World's Best

More Than 150 Brands

15+

AI Innovations Successfully Implemented

14+

Years of Proven Industry Leadership

30+

Nations Reached Worldwide

100%

Dedicated to Responsible AI Standards

Maximize Efficiency and Scale Rapidly with Custom Generative AI Development

Generative AI has evolved into a central force driving enterprise innovation and competitive differentiation. As organizations integrate it into core operations, they are transforming workflow automation, decision intelligence, and global customer engagement.

Powered by advanced LLMs and multimodal architectures, modern generative systems automate complex cognitive tasks, convert vast data into strategic insights, and generate high-quality digital assets instantly.

At Cqlsys, we move beyond experimentation to deliver enterprise-grade Generative AI solutions—combining strategic alignment, model customization, secure deployment, and continuous optimization to ensure scalable, measurable business impact.

Our Suite of Specialized Generative AI & Machine Learning Services

The pace of AI evolution is relentless. At Cqlsys, we capture this technological velocity and anchor it into practical, high-impact enterprise solutions. As your strategic innovation partner, we dismantle legacy operational hurdles and replace them with high-velocity, future-proof architectures designed to scale alongside your business.

- Predictive Analytics for Strategic Decisions: Transition from reactive management to proactive leadership. We build models that synthesize historical data to forecast market shifts, demand fluctuations, and financial outcomes.

- Intelligent Recommendation Engines: Boost user lifetime value by deploying discovery systems that analyze behavioral nuances to suggest products or content with pinpoint accuracy.

- Real-Time Sentiment Analysis: Instantly gauge the public's pulse. Our tools monitor digital conversations to provide an immediate understanding of brand perception and customer satisfaction.

- Advanced Conversational AI: Replace rigid bots with sophisticated LLM-based agents capable of handling complex, multi-turn dialogues that feel authentically human.

- Smart Customer Service Automation: Streamline your support ecosystem with end-to-end automation that categorizes, routes, and resolves inquiries without manual intervention.

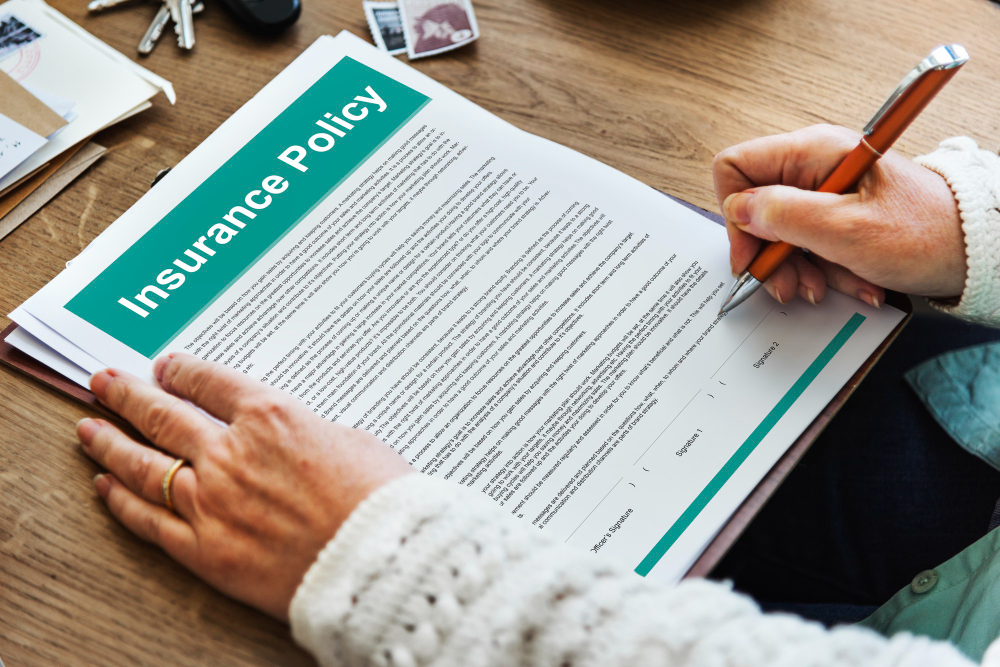

- Document Intelligence & OCR Solutions: Turn stagnant paperwork into active data. Our high-precision OCR systems extract and validate information from invoices, contracts, and IDs in seconds.

- AI-Powered Object Detection: Enhance security and operational awareness with vision systems that identify, track, and categorize assets in real-time environments.

- AI-Powered Visual Inspection: Standardize quality across manufacturing lines. Our AI detects sub-millimeter defects and inconsistencies that the human eye might miss.

- Fraud Detection & Risk Intelligence: Safeguard your enterprise with neural networks that identify anomalous transactional patterns and mitigate financial threats instantly.

- AI for Smart Logistics & Supply Chain: Optimize global operations with intelligent routing and inventory management that adapts to weather, traffic, and geopolitical variables.

- AI in Healthcare & Diagnostics: Support clinical decision-making with deep-learning models trained for early-stage disease detection and medical imaging analysis.

- AI for IoT & Edge Devices: Bring intelligence directly to the hardware. We deploy optimized models for local, low-latency processing on sensors and smart devices.

- AI for Blockchain & Crypto: Secure decentralized ecosystems by applying AI to smart contract auditing and the detection of sophisticated on-chain security threats.

Our object detection solutions leverage advanced computer vision models to identify and locate objects in images or video streams. Whether it’s counting products on a conveyor belt, detecting vehicles in traffic, or guiding autonomous systems, our solutions deliver fast, reliable, and scalable performance. By automating object identification, you gain deeper visibility, faster decision-making, and greater operational efficiency.

Our custom visual inspection solutions are built to identify surface defects, assembly errors, or quality deviations that human eyes may miss. Using deep learning and image processing, these systems ensure every product meets your quality standards while reducing manual inspection time and cost. From manufacturing lines to safety monitoring, we help you maintain consistent product quality and operational excellence.

We help businesses provide more personalized customer support through AI-first automation. Our smart virtual assistants understand human language and integrate easily with your CRM and live chat platforms.

Protect revenue with AI-powered fraud detection systems that spot threats in real time. Our AI models use pattern recognition and behavior-based scoring to flag suspicious activity across finance, crypto, and eCommerce platforms.

As a leading AI development company in India and the USA, we automate document-heavy workflows with AI-powered tools that extract, classify, and summarize information at scale.

Optimize logistics and reduce operational costs with our AI-driven planning and automation. Our enterprise AI development company brings intelligence to every step of your logistics workflow.

Enhance care delivery with AI solutions that improve diagnosis speed and clinical decision-making. We specialize in predictive risk scoring, treatment recommendations, image-based diagnostics, and smart patient triage.

As a leading AI IoT software development company, we make devices, sensors, and physical systems smarter and more responsive. Our AI-powered IoT solutions run in real time at the edge.

We make decentralized platforms and token ecosystems smart with AI-driven solutions for crypto and Web3. Our AI-powered blockchain development company builds tools that can integrate easily with exchanges, wallets, and DAOs.

Future-Proof Generative AI Architectures Tailored to Your Enterprise

Following a rigorous evaluation of your strategic goals, we engineer and implement a bespoke Generative AI ecosystem optimized for your unique operational environment. Our approach moves beyond off-the-shelf software, ensuring the underlying infrastructure is as resilient as it is innovative.

Autonomous Systems & Model Refinement

- Intelligent AI Agent Development: We engineer autonomous digital agents tailored specifically to your internal and external workflows. These agents go beyond simple responses—they are designed to execute complex tasks, elevate engagement quality, and proactively manage service interactions with minimal supervision.

- Custom LLM Testing and Fine-Tuning: Achieve peak performance with specialized model optimization. We conduct rigorous validation and hyperparameter tuning of large language models to maximize reliability, reduce hallucinations (fabricated or distorted outputs), and sharpen contextual precision for your specific industry niche.

Infrastructure Integration & Learning

- Advanced ML Integration: We embed adaptive learning mechanisms directly into your core AI infrastructure. This ensures your systems don't just process data; they evolve. By implementing continuous feedback loops, we support ongoing refinement and performance optimization based on real-world usage patterns.

- Seamless GPT Framework Integration: Connect the world’s most advanced GPT architectures to your existing technology stack. We facilitate deep-level API and environment integration to elevate the sophistication of your digital output, accelerate content lifecycles, and unlock new layers of creative and analytical potential.

Our Core Specializations in Generative AI Model Architectures

As a leading AI development company, we make AI practical. Our custom AI development services help you build intelligent systems that fit your goals, integrate with your stack, and deliver real business impact.

Agentic AI & Autonomous Task Orchestration

We specialize in "Agentic" systems—AI that doesn't just respond, but actively uses tools to complete goals. Our agents can navigate APIs, manage databases, and execute multi-step workflows (like end-to-end claims processing) with independent reasoning.

Enterprise RAG (Retrieval-Augmented Generation)

We reduce AI hallucinations by grounding models in your proprietary data. We build secure vector pipelines that allow LLMs to "read" your internal documents and databases in real time, providing accurate, brand-specific answers.

Multi-Modal Vision & Cognitive Video Analytics

We bridge the gap between the physical and digital. Our multi-modal models analyze live video, thermal feeds, and spatial data to automate high-stakes environments like autonomous warehouses, smart retail, and surgical theaters.

On-Premise & Edge LLM Deployment

For organizations with strict data sovereignty requirements, we specialize in deploying Small Language Models (SLMs) on private infrastructure. Data stays inside your own environment, inference avoids round-trips to external APIs, and third-party API costs drop significantly.

AI Red Teaming & Governance

We provide the safety layer for enterprise AI. Our specialization includes adversarial testing to prevent prompt injections, bias mitigation, and the implementation of "Guardrail Layers" to ensure your AI remains compliant with global regulations.

Synthetic Data Generation for Model Training

When real-world data is scarce or sensitive, we develop generative models to create high-fidelity synthetic datasets. This allows you to train robust machine learning models for healthcare, finance, or simulation without compromising privacy.

Digital Twin AI & Industrial Simulation

We build AI-powered digital twins that simulate complex physical systems. By integrating real-time IoT data with predictive AI, we enable manufacturers to run "what-if" scenarios, optimizing maintenance and production cycles before a single machine moves.

Cognitive Document Intelligence (LLM-OCR)

We go beyond simple text extraction. Our document intelligence systems use LLMs to understand the meaning of complex legal contracts, medical records, and financial statements, transforming messy paperwork into structured, actionable insights.

AIOps & Self-Healing Infrastructure

We specialize in applying AI to the IT stack itself. Our AIOps solutions monitor enterprise ecosystems to predict hardware failures, automate security patching, and optimize cloud spend through autonomous resource allocation.

Our Core AI Competencies

We move beyond basic automation to deliver specialized intelligence. Here is how we categorize our technical mastery:

Data-Driven Foresight (ML)

Trust is built on accuracy. We develop machine learning models focused on statistical validation and bias reduction, ensuring your forecasts and classifications are both reliable and defensible in a high-stakes environment.

Cognitive Interpretation (NLP)

Beyond simple keywords, we build systems that respect the complexity of human communication. We prioritize contextual accuracy and data privacy, allowing you to automate sensitive workflows without losing the human nuance.

Verified Visual Intelligence (Computer Vision)

We bring "human-eye" precision to digital scale. Our systems are built for industrial-grade reliability, providing consistent monitoring and detection where there is zero margin for error.

Managed Generative Systems (GenAI)

We bridge the gap between "experimental" and "enterprise-ready." Our approach focuses on reducing hallucinations and securing your intellectual property, building custom LLM applications that stay within your brand's guardrails.

Strategic Logic Engines (Reinforcement Learning)

For complex decision-making, we provide systems that are stress-tested in simulated environments. We deliver traceable logic that optimizes your operations while prioritizing safety and long-term sustainability.

Resilient Local Intelligence (Edge AI)

Security begins at the device level. By processing data locally on the "Edge," we minimize data exposure and eliminate connectivity risks, ensuring your systems remain intelligent even in offline or high-security environments.

Not Sure Where to Start with AI?

We'll help you find the right use case, build a PoC, and scale it into production with measurable ROI.

Schedule a Free Strategy Call

From Concept to Core Utility: Our Generative AI Milestones

Every project we undertake is rooted in a commitment to measurable ROI and operational stability. Here is how our strategic partnerships have solved critical business challenges.

Future-Ready Architecture: Our Generative AI Service Suite

We don’t just implement tools; we build resilient AI ecosystems. Our methodology ensures that your GenAI investment is secure, scalable, and deeply integrated into your core business logic.

Autonomous AI Agent Engineering

Move beyond basic chatbots. We develop goal-oriented AI agents capable of executing multi-step workflows, navigating complex customer journeys, and interacting with your internal APIs to solve problems in real time.

Rigorous LLM Validation & Fine-Tuning

A model is only as good as its reliability. We conduct systematic stress testing and domain-specific fine-tuning to eliminate hallucinations, neutralize algorithmic bias, and ensure that every output aligns perfectly with your brand’s safety standards and expertise.

Adaptive ML Synthesis

We enhance Generative AI with the grounding of traditional Machine Learning. By integrating predictive ML components, your models don't just generate content—they adapt based on user behavior and historical data, becoming more accurate and efficient over time.

Enterprise GPT Integration

We bridge the gap between cutting-edge LLMs and your legacy infrastructure. Our integration specialists deploy the latest GPT frameworks via secure, low-latency pipelines, optimizing for speed and ensuring your proprietary data remains isolated and encrypted.

Continuous Optimization & Monitoring

The AI landscape shifts weekly. We provide the monitoring infrastructure required to track model drift, manage token costs, and update your systems as newer, more efficient architectures become available.

Generative AI Tech Stack: Enterprise-Grade Foundations

We utilize a high-performance, vetted ecosystem of tools. By selecting only the most resilient frameworks, we ensure your Generative AI infrastructure is scalable, secure, and fully private.

Frameworks

PyTorch

TensorFlow

JAX

Hugging Face Transformers

Platforms / Models

OpenAI GPT

Anthropic Claude

Google Gemini

Meta LLaMA

Stable Diffusion

Frameworks

OpenCV

PyTorch Vision

TensorFlow Vision

Detectron2

YOLO

Libraries

MediaPipe

Keras CV

Frameworks / Orchestration

MLflow

Kubeflow

Apache Airflow

Prefect

Cloud Platforms

AWS SageMaker

Azure Machine Learning

Google Vertex AI

Monitoring

Weights & Biases

Evidently AI

Security & Governance Tools

HashiCorp Vault

Okta

Azure Confidential Computing

AWS IAM

Google Cloud Security Command Center

Compliance & Monitoring

Microsoft Purview

Prisma Cloud

Frameworks

LangChain

LlamaIndex

Microsoft Semantic Kernel

AutoGen

CrewAI

Frameworks / Libraries

spaCy

NLTK

Hugging Face

Stanford NLP

LLM APIs

OpenAI

Anthropic

Cohere

Data Frameworks

Apache Spark

Databricks

Snowflake

BI Tools

Power BI

Tableau

Looker

Vector Databases

Pinecone

Weaviate

FAISS

Milvus

RAG Frameworks

LangChain

LlamaIndex

Industry Expertise: Engineering High-Impact Sector Solutions

Generative AI is no longer experimental; in 2026, it is the backbone of operational excellence. We deploy specialized, domain-aware models that speak the language of your industry and respect its specific guardrails.

Strengthen Your Competitive Position with On-Demand Generative AI Experts

Enterprise AI programs require deep specialization, not broad generalization. Cqlsys provides access to seasoned Generative AI professionals with proven experience delivering secure, scalable solutions in complex, high-stakes environments. We ensure every engagement is architected for performance, compliance, and measurable business impact.

The Visionaries of Reasoning: These specialists focus on the "New Stack" of AI, creating systems that understand, reason, and act with human-level nuance.

Core Proficiencies:

Generative architectures, language systems, large-scale models, prompt optimization, and retrieval-augmented (RAG) frameworks.

Strategic Applications:

Digital copilots, conversational automation, document intelligence, and semantic search platforms.

The Masters of Perception: These engineers build the "eyes and brains" of your operation, focusing on pattern recognition, computer vision, and predictive logic.

Core Proficiencies:

Forecasting models, visual analytics, temporal data modeling, and operational deployment (MLOps) pipelines.

Strategic Applications:

Demand estimation, personalization engines, quality validation, and risk modeling.

The Strategists of Insight: These experts translate raw data into competitive advantages, ensuring your models are grounded in statistical truth rather than speculation.

Core Proficiencies:

Data structuring, quantitative modeling, performance validation, and advanced feature extraction.

Strategic Applications:

Attrition modeling, audience segmentation, experimental (A/B) analysis, and strategic insights.

The Scalability Experts: The "missing link" in most AI projects. These specialists ensure your models are secure, fast, and cost-efficient at scale.

Core Proficiencies:

Vector database orchestration, GPU compute optimization, and cloud-native AI infrastructure.

Strategic Applications:

Reducing model latency, managing "model drift," and ensuring 99.9% uptime for global AI services.

Explore our engagement models, then choose the AI talent that fits your goals.

Our AI Development Lifecycle: From Concept to Production

Navigating the complexities of artificial intelligence can be challenging. We utilize a battle-tested, iterative methodology designed to mitigate risk and maximize ROI through transparent, measurable stages.

Discovery & Strategic Alignment

Before a single line of code is written, we define the "North Star" of the project. We analyze your data ecosystem, identify high-impact use cases, and establish the KPIs that will define success.

- Objective: Feasibility analysis, ROI mapping, and data privacy auditing.

- Output: A comprehensive AI Roadmap and Technical Blueprint.

Intelligent Data Acquisition

We acquire and annotate representative datasets directly tied to your defined business challenges. This ensures predictive integrity, making certain the model learns from high-fidelity, real-world information.

- Objective: Sourcing, labeling, and synthetic data generation (if required).

- Output: A curated, high-integrity data warehouse.

Data Refinement & Preparation

Raw data is rarely production-ready. We refine and structure datasets to eliminate inconsistencies, remove bias, and enhance modeling quality. This stage is the "quality control" that prevents garbage-in, garbage-out scenarios.

- Objective: Normalization, deduplication, and feature engineering.

- Output: A finalized, model-ready dataset.
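As a rough illustration of the normalization, deduplication, and feature engineering named above, the following minimal sketch uses only the Python standard library. The field names (`customer_id`, `total_spend`, `orders`) and the derived `spend_per_order` feature are hypothetical, and a real pipeline would usually run on a dataframe or distributed engine instead.

```python
def deduplicate(records, key):
    """Keep the first record seen for each key value, preserving order."""
    seen = set()
    unique = []
    for record in records:
        if record[key] not in seen:
            seen.add(record[key])
            unique.append(record)
    return unique


def min_max_normalize(values):
    """Rescale values linearly into [0, 1]; a constant column maps to 0.0."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]


def add_ratio_feature(record):
    """Engineered feature (hypothetical): average spend per order."""
    enriched = dict(record)
    orders = record["orders"]
    enriched["spend_per_order"] = record["total_spend"] / orders if orders else 0.0
    return enriched


# Hypothetical raw rows containing one duplicate customer.
RAW = [
    {"customer_id": 1, "total_spend": 100.0, "orders": 4},
    {"customer_id": 2, "total_spend": 300.0, "orders": 3},
    {"customer_id": 1, "total_spend": 100.0, "orders": 4},
]

if __name__ == "__main__":
    rows = [add_ratio_feature(r) for r in deduplicate(RAW, "customer_id")]
    scaled = min_max_normalize([r["total_spend"] for r in rows])
    for row, value in zip(rows, scaled):
        print(row["customer_id"], row["spend_per_order"], value)
```

Deduplicating before scaling matters here: repeated rows would otherwise be counted more than once in any statistic computed later.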

High-Performance Model Training

Our engineers select the optimal frameworks and architectures (LLMs, Vision Transformers, or Graph Neural Networks) and train them against rigorous performance benchmarks to ensure absolute reliability.

- Objective: Hyperparameter tuning, loss function optimization, and cross-validation.

- Output: A high-accuracy model prototype.

Seamless Model Deployment

We integrate validated systems within your operational environments to maximize process synergy. Whether on-premise, in a private cloud, or at the edge, we ensure the AI fits into your existing tech stack.

- Objective: Containerization (Docker/Kubernetes), API development, and latency optimization.

- Output: A live, functional AI solution.

Rigorous Solution Testing & MLOps

We conduct comprehensive verification to ensure stability, performance accuracy, and uninterrupted operation. We don't just "deploy and leave"—we monitor for "model drift" and ensure the system evolves as your data does.

- Objective: Stress testing, security "Red Teaming," and real-time monitoring.

- Output: A resilient, self-improving AI ecosystem.
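One common way to put a number on the "model drift" mentioned above is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it is distributed in production. The sketch below is an illustrative choice of metric, not necessarily the one used in any given engagement. The equal-width bucketing, the `1e-6` floor, and the sample data are assumptions made for the example.

```python
import math


def population_stability_index(expected, actual, bins=5):
    """PSI between a baseline sample and a live sample of one numeric feature."""
    low = min(min(expected), min(actual))
    high = max(max(expected), max(actual))
    width = (high - low) / bins or 1.0  # fall back to 1.0 if all values are equal

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = min(int((v - low) / width), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e_shares = bucket_shares(expected)
    a_shares = bucket_shares(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares))


if __name__ == "__main__":
    baseline = [0.1 * i for i in range(100)]  # hypothetical training-time values
    shifted = [v + 5 for v in baseline]       # hypothetical production values
    print(round(population_stability_index(baseline, baseline), 4))
    print(round(population_stability_index(baseline, shifted), 4))
```

A frequently quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though suitable thresholds depend on the feature and the use case.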

Sector Mastery: Architecting Domain-Specific Intelligence

We operate on the principle that while AI is universal, its application must be surgical. Success requires more than a "one-size-fits-all" approach; it demands high-integrity, bespoke engineering designed to navigate the exact complexities of your industry.

- Private AI systems for automated underwriting, fraud detection, and compliant reporting.

- AI-driven grid optimization and automated ESG reporting.

- Low-latency, self-healing network intelligence for next-gen connectivity.

- Cybersecurity & Defense – Autonomous AI agents that detect and neutralize advanced threats.

- AI for drug discovery, clinical intelligence, and compliant automation.

- Real-time disruption simulation and autonomous routing optimization.

- AI shopping agents delivering hyper-personalized commerce experiences.

- Intelligent vehicle copilots for predictive maintenance and energy optimization.

- AI-powered defect detection and smart production diagnostics.

- Real Estate & Construction – Automated valuation, lease abstraction, and 3D property modeling.

- AI-based underwriting, claims automation, and risk assessment.

- Travel & Hospitality – Real-time personalized itineraries and AI-powered concierge systems.

- Generative content creation and automated localization at scale.

- AI-driven document review, redlining, and litigation support.

- Adaptive AI tutors for personalized, lifelong learning.

- Secure AI systems for citizen services and workflow automation.

- AI monitoring for safety, mapping, and operational optimization.

- Autonomous navigation and mission-critical AI systems.

- Precision farming and climate-resilient crop intelligence.

- AI-powered grant research and personalized donor engagement.

Our Strategic Alliances: Powering Enterprise-Grade AI

We have forged deep technical partnerships with globally recognized cloud and enterprise technology leaders to deliver robust, high-performance AI applications. These alliances empower us to provide the scalable infrastructure and advanced toolsets necessary for long-term industrial success.

Cloud Infrastructure & Compute Leaders

Through our partnerships with major hyperscalers, we provide the raw GPU power and serverless architectures required for massive-scale inference and training.

Capabilities

- Auto-scaling compute clusters

- Low-latency edge processing

- Global deployment footprints

Key Partners

AWS

Azure

Google Cloud

Sovereign Data & Security Frameworks

Security is the foundation of our partnership strategy, ensuring every AI deployment meets the highest compliance standards.

Capabilities

- End-to-end encryption

- Private-tenant model hosting

- Automated compliance auditing

Key Partners

NVIDIA

Databricks

Snowflake

Model Orchestration & Vector Intelligence

Powering your AI reasoning layer with modern retrieval systems and orchestration engines.

Capabilities

- High-speed semantic search

- Real-time data ingestion

- Seamless LLM orchestration

Key Partners

Pinecone

MongoDB

LangChain

Hugging Face

Enterprise Integration & MLOps

Native integration into your enterprise ecosystem with production-ready monitoring and deployment pipelines.

Capabilities

- Seamless API connectivity

- CI/CD for machine learning

- Real-time performance monitoring

Key Partners

Weights & Biases

Salesforce

SAP

Oracle

Why Choose Cqlsys for Generative AI Development & Services

Advanced AI initiatives demand precision engineering, deep domain understanding, and execution discipline. At Cqlsys, we move beyond the hype of Generative AI to deliver high-performance, enterprise-grade solutions that are strictly aligned with your strategic business objectives.

Frequently Asked Questions: Generative AI with Cqlsys

While both customize LLM behavior, the choice depends on the dynamic nature of your data.

RAG (Retrieval-Augmented Generation): Best for data that changes frequently (e.g., real-time inventory, documentation). It retrieves external context at runtime without altering the model.

Fine-Tuning: Best for teaching the model a specific style, format, or niche terminology (e.g., medical jargon or proprietary coding styles). It modifies the actual weights of the model.

The Cqlsys Approach: We often recommend a hybrid strategy: fine-tuning for domain-specific "etiquette" and RAG for factual accuracy.

Standard SQL databases aren't built for "semantic similarity." A Vector Database (like Pinecone, Milvus, or Weaviate) stores data as high-dimensional embeddings—numerical representations of meaning. When a user asks a question, the system converts that query into a vector and finds the mathematically "closest" data points to provide as context to the LLM.
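The "closest data points" idea can be sketched in a few lines. The example below is a toy, in-memory stand-in for a vector database. The three-dimensional vectors and document names are made up for illustration (real embedding models produce hundreds or thousands of dimensions), and cosine similarity is assumed as the distance measure.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query_vec, index, k=2):
    """Return the k (doc_id, score) pairs most similar to the query vector."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Hypothetical embeddings keyed by document name.
INDEX = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "api-rate-limits": [0.0, 0.2, 0.9],
}

if __name__ == "__main__":
    query = [0.8, 0.2, 0.1]  # pretend embedding of "How do I get my money back?"
    for doc_id, score in top_k(query, INDEX):
        print(f"{doc_id}: {score:.3f}")
```

A production vector database replaces the linear scan in `top_k` with an approximate nearest-neighbor index so that lookups stay fast across millions of vectors.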

- Grounding: Forcing the model to cite specific sources via RAG.

- Temperature Control: Lowering the temperature parameter (e.g., to 0.1 or 0.2) to make the model more deterministic and less "creative."

- Verification Chains: Implementing a "Self-Check" agent that reviews the output against the retrieved context before displaying it to the user.
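To see why a lower temperature makes output more deterministic, it helps to look at the arithmetic. The sketch below applies a temperature-scaled softmax to three made-up token scores. The logit values are hypothetical, and real models apply the same idea across an entire vocabulary.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens the peak."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


LOGITS = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate tokens

if __name__ == "__main__":
    for t in (1.0, 0.2):
        probs = softmax_with_temperature(LOGITS, t)
        print(t, [round(p, 3) for p in probs])
```

At a temperature of 0.2 nearly all of the probability mass lands on the top-scoring token, so sampling rarely picks anything else.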

It’s more than just asking a question. Technical prompt engineering involves:

- Few-Shot Prompting: Providing 2–3 examples of the desired input/output within the prompt.

- Chain-of-Thought (CoT): Instructing the model to "think step-by-step" to improve reasoning in complex logic or math tasks.

- System Instructions: Defining the model's persona and constraints at the API level (e.g., "You are a senior DevOps engineer; never provide Python code, only Bash scripts.").
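The three techniques above can be combined in a single request. The sketch below assembles a chat-style message list. The `role`/`content` dictionary shape is an assumption based on the convention used by several chat APIs, and `build_messages` and the example pairs are hypothetical names invented for this illustration.

```python
def build_messages(system_instruction, examples, user_input):
    """Assemble system rules, few-shot example pairs, and the final user query."""
    messages = [{"role": "system", "content": system_instruction}]
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    # Chain-of-thought cue appended to the final user turn.
    messages.append({"role": "user", "content": f"{user_input}\nThink step-by-step."})
    return messages


SYSTEM = "You are a senior DevOps engineer; never provide Python code, only Bash scripts."
EXAMPLES = [
    ("List files larger than 1 GB.", "find . -type f -size +1G"),
    ("Show disk usage per directory.", "du -sh */"),
]

if __name__ == "__main__":
    for message in build_messages(SYSTEM, EXAMPLES, "Count lines in all .log files."):
        print(message["role"], "|", message["content"])
```

Keeping the few-shot pairs as alternating user and assistant turns, rather than pasting them into one long prompt string, tends to make the expected output format clearer to the model.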

We prioritize Data Residency and Anonymization:

- Private VPCs: Deploying models within your private cloud (AWS/Azure) so data never leaves your perimeter.

- PII Masking: Using automated pipelines to scrub Personally Identifiable Information (PII) before it reaches the LLM.

- Zero-Data Retention: Utilizing Enterprise API agreements that guarantee your data is not used for future model training.
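A minimal sketch of the PII masking step might look like the following. The regular expressions are illustrative assumptions that cover only simple email, US-style phone, and US Social Security number formats. Production pipelines typically combine patterns like these with named-entity recognition.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def mask_pii(text):
    """Replace each matched span with a bracketed label before the text leaves your perimeter."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    raw = "Contact jane.doe@example.com or 555-123-4567, SSN 123-45-6789."
    print(mask_pii(raw))
```

Replacing values with typed labels such as `[EMAIL]` keeps the sentence readable for the model while removing the sensitive value itself.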

Traditional GenAI is linear (Input → Output). Agentic workflows allow the AI to use "tools." For example, if an AI agent needs to check a flight status, it doesn't "guess"; it recognizes it needs more info, calls an external API, parses the JSON, and then formulates its response.
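The flight-status flow described above can be sketched as a small loop. Everything here is a stand-in: `fake_model` imitates an LLM's decisions with fixed rules, `get_flight_status` returns canned JSON instead of calling a real airline API, and the flight number is invented.

```python
import json


def get_flight_status(flight_number):
    """Hypothetical tool; a real one would call an external API and return its JSON body."""
    return json.dumps({"flight": flight_number, "status": "delayed", "delay_minutes": 40})


TOOLS = {"get_flight_status": get_flight_status}


def fake_model(messages):
    """Stand-in for an LLM: request a tool first, then answer from the tool result."""
    last = messages[-1]
    if last["role"] == "tool":
        data = json.loads(last["content"])  # parse the JSON returned by the tool
        text = f"Flight {data['flight']} is {data['status']} by {data['delay_minutes']} minutes."
        return {"type": "answer", "content": text}
    return {"type": "tool_call", "name": "get_flight_status",
            "arguments": {"flight_number": "CQ123"}}


def run_agent(user_question, model=fake_model, max_steps=5):
    """Loop until the model answers, executing any tool it asks for along the way."""
    messages = [{"role": "user", "content": user_question}]
    for _ in range(max_steps):
        reply = model(messages)
        if reply["type"] == "answer":
            return reply["content"]
        result = TOOLS[reply["name"]](**reply["arguments"])
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


if __name__ == "__main__":
    print(run_agent("Is flight CQ123 on time?"))
```

The `max_steps` cap is a simple safeguard so that a model which keeps requesting tools cannot loop forever.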

Large models (like Llama 3 or GPT-4) are compute-heavy. Quantization reduces the precision of the model's weights (e.g., from 16-bit to 4-bit). This drastically lowers memory usage and speeds up inference with minimal loss in accuracy, allowing high-performance models to run on more affordable hardware.
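The memory savings follow directly from the bit width. The sketch below shows a simple symmetric quantization scheme and the storage arithmetic for a hypothetical 8-billion-parameter model. Real toolchains use more elaborate schemes (per-group scales, calibration data), so treat this as an illustration of the principle only.

```python
def quantize_symmetric(weights, bits=4):
    """Map floats to signed integers in [-(2**(bits-1) - 1), 2**(bits-1) - 1]."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


def weight_memory_gb(num_params, bits):
    """Approximate storage for weights alone, ignoring scales and activations."""
    return num_params * bits / 8 / 1e9


if __name__ == "__main__":
    weights = [0.7, -0.35, 0.1, 0.0]  # hypothetical weight values
    q, scale = quantize_symmetric(weights, bits=4)
    print(q, [round(w, 3) for w in dequantize(q, scale)])
    print(weight_memory_gb(8e9, 16), "GB at 16-bit")
    print(weight_memory_gb(8e9, 4), "GB at 4-bit")
```

Each reconstructed weight differs from the original by at most half the scale step, which is the source of the small accuracy loss.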

Standard metrics like "Accuracy" are hard for text. We use:

- BLEU/ROUGE: For comparing generated text to a reference (useful for summarization).

- Faithfulness/Relevancy: Specifically for RAG, measuring if the answer matches the retrieved context.

- Latency & Throughput: Measuring Tokens Per Second (TPS) to ensure the UI feels responsive to users.
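Two of the metrics above are simple enough to compute by hand. The sketch below implements a basic ROUGE-1 recall and a tokens-per-second calculation. It assumes whitespace tokenization and lowercase matching, which is cruder than the tokenizers used by standard evaluation libraries, and the sentences and timings are made up.

```python
from collections import Counter


def rouge1_recall(reference, candidate):
    """Fraction of reference unigrams that also appear in the candidate (clipped counts)."""
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())
    overlap = sum(min(count, cand_counts[token]) for token, count in ref_counts.items())
    total = sum(ref_counts.values())
    return overlap / total if total else 0.0


def tokens_per_second(num_tokens, elapsed_seconds):
    """Throughput of a generation call, given its token count and wall-clock time."""
    return num_tokens / elapsed_seconds


if __name__ == "__main__":
    reference = "the cat sat on the mat"
    candidate = "the cat lay on the mat"
    print(round(rouge1_recall(reference, candidate), 3))
    print(tokens_per_second(240, 4.0))
```

Overlap metrics like this reward shared wording rather than factual correctness, which is why they are paired with faithfulness checks for RAG systems.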

- Encoder-only (e.g., BERT): Excellent for understanding relationships and classifying text (Sentiment analysis, Named Entity Recognition).

- Decoder-only (e.g., GPT series): Optimized for generating the next token in a sequence, making them the standard for "Generative" tasks.

- Encoder-Decoder (e.g., T5): Great for translation or summarization where you transform one sequence into another.

Absolutely. At Cqlsys, we integrate GenAI into the Software Development Life Cycle by:

- Automated Unit Testing: Generating test cases based on function logic.

- CI/CD Documentation: Automatically updating READMEs and API docs whenever code is pushed.

- Legacy Refactoring: Using LLMs to translate old COBOL or Java code into modern Microservices architectures.